Ontology Similarity Measuring and Ontology Mapping Algorithms Based on Fused Lasso Signal Approximator

Yun Gao1, Wei Gao2,*

1Department of Editorial, Yunnan Normal University, Kunming, China

2School of Information Science and Technology, Yunnan Normal University, Kunming, China

Abstract

Ontology similarity calculation is important research topics in information retrieval and widely used in science and engineering. By analyzing the technology of fused lasso signal approximator, we propose the new algorithm for ontology similarity measure and ontology mapping. Via the ontology sparse vector learning, the ontology graph is mapped into a line consists of real numbers. The similarity between two concepts then can be measured by comparing the difference between their corresponding real numbers. The experiment results show that the proposed new algorithm has high accuracy and efficiency on ontology similarity calculation and ontology mapping.

Keywords

Ontology, Similarity Measure, Ontology Mapping, Sparse Vector, Fused Lasso Signal Approximator

Received: April 2, 2015 / Accepted: April 17, 2015 / Published online: May 8, 2015

@ 2015 The Authors. Published by American Institute of Science. This Open Access article is under the CC BY-NC license. http://creativecommons.org/licenses/by-nc/4.0/

1. Introduction

As a conceptual shared and knowledge representation model, ontology has been used in knowledge management, image retrieval and information retrieval search extension. Furthermore, acted as an effective concept semantic model, ontology is employed in other fields except computer science, including medical science, social science, pharmacology science, geography science and biology science (see Przydzial et al., [1], Koehler et al., [2], Ivanovic and Budimac [3], Hristoskova et al., [4], and Kabir et al., [5] for more detail).

The ontology model is a graph G = (V,E) such that each vertex v expresses a concept and each directed edge e=vivj denote a relationship between concepts vi and vj. The aim of ontology similarity measure is to get a similarity function Sim: V×V ![]()

![]()

![]() {0} such that each pair of vertices is mapped to a non-negative real number. Moreover, the aim of ontology mapping is to obtain the link between two or more ontologies. In more applications, the key of ontology mapping is to get a similarity function S to determine the similarity between vertices from different ontologies.

{0} such that each pair of vertices is mapped to a non-negative real number. Moreover, the aim of ontology mapping is to obtain the link between two or more ontologies. In more applications, the key of ontology mapping is to get a similarity function S to determine the similarity between vertices from different ontologies.

In recent years, ontology similarity-based technologies were employed in many applications. By virtue of technology for stable semantic measurement, a graph derivation representation based trick for stable semantic measurement is presented by Ma et al., [6]. Li et al., [7] determined an ontology representation method which can be used in online shopping customer knowledge with enterprise information. A creative ontology matching system is proposed by Santodomingo et al., [8] such that the complex correspondences are deduced by processing expert knowledge with external domain ontologies and in view of novel matching technologies. The main features of the food ontology and several examples of application for traceability aims were reported by Pizzuti et al., [9]. Lasierra et al., [10] pointed out that ontologies can be employed in designing an architecture for taking care of patients at home. More ontology learning algorithms can refer to [11-22].

In this paper, we present the new ontology similarity computation and ontology mapping algorithms relied on sparse vector learning and fused lasso signal approximator. In terms of the sparse vector, the ontology graph is mapped into a real line and vertices are mapped into real numbers. Then the similarity between vertices is measured by the difference between their corresponding real numbers.

2. Basic Idea

Let V be a instance space. For any vertex in ontology graph G, its information (including its attribute, instance, structure, name and semantic information of the concept which is corresponding to the vertex and that is contained in its vector) is denoted by a vector with p dimension. Let v=![]() be a vector which is corresponding to a vertex v. For facilitating the expression, we slightly confuse the notations and denote v both the ontology vertex and its corresponding vector. The purpose of ontology learning algorithms is to get an optimal ontology function f: V

be a vector which is corresponding to a vertex v. For facilitating the expression, we slightly confuse the notations and denote v both the ontology vertex and its corresponding vector. The purpose of ontology learning algorithms is to get an optimal ontology function f: V![]()

![]() , then the similarity between two vertices is determined by the difference between two real numbers which they correspond to. The essence of such algorithm is dimensionality reduction, that is to say, use one dimension vector to represent p dimension vector. From this point of view, an ontology function f can be regarded as a dimensionality reduction map f:

, then the similarity between two vertices is determined by the difference between two real numbers which they correspond to. The essence of such algorithm is dimensionality reduction, that is to say, use one dimension vector to represent p dimension vector. From this point of view, an ontology function f can be regarded as a dimensionality reduction map f: ![]()

![]()

![]() .

.

In the real implement, the sparse ontology function can be denoted as

![]() =

= , (1)

, (1)

where ![]() =

=![]() is a sparse vector. For determining the ontology function f, we should get the sparse vector

is a sparse vector. For determining the ontology function f, we should get the sparse vector ![]() first.

first.

3. Main Ontology Algorithms

In this section, we present our main ontology sparse vector learning algorithms for ontology similarity measuring and ontology mapping by virtue of fused lasso signal approximator.

The one-dimensional fussed lasso signal approximator is defined by

![]()

![]() , (2)

, (2)

where ![]() =

=![]() . By introducing the auxiliary variables

. By introducing the auxiliary variables ![]() , i = 2,…, p, the following linearly constrained problem is trivially equivalent to (2),

, i = 2,…, p, the following linearly constrained problem is trivially equivalent to (2),

![]()

![]() ,

,

s. t. ![]() =

=![]() , i = 2,…, p.

, i = 2,…, p.

Set c>0, the augmented Lagrangian is defined by

![]() =

=![]()

![]() ,

,

where ![]() is the Lagrange multiplier.

is the Lagrange multiplier.

Considering the following saddle-point problem,

![]()

![]()

![]() ,

,  .(3)

.(3)

By the duality theory, ![]() is a solution of (2) if and only if

is a solution of (2) if and only if ![]() is a solution of (3) for some

is a solution of (3) for some ![]() and

and ![]() .

.

The popular algorithm for searching the saddle point is described as follows:

Initialize ![]() , arbitrarily.

, arbitrarily.

For k=1,2,…

![]() =

=![]()

![]() =

=![]() , i=2,…, p.

, i=2,…, p.

In general, it is difficult to minimize ![]() over

over ![]() and

and ![]() simultaneously, but it might be easier to minimize over

simultaneously, but it might be easier to minimize over ![]() when fixing

when fixing ![]() and vice versa. In this case, we can alternate these two steps until convergence. It turns out that we can update

and vice versa. In this case, we can alternate these two steps until convergence. It turns out that we can update ![]() and

and ![]() just once when the other is fixed, resulting in the following algorithm (raised by [23])

just once when the other is fixed, resulting in the following algorithm (raised by [23])

Initialize ![]() and

and ![]() , arbitrarily.

, arbitrarily.

For k=1, 2,…

![]() =

=![]() ,

,

![]() =

=![]() ,

,

![]() =

=![]() , i=2,…, p.

, i=2,…, p.

For example, apply the second algorithm to (2) with quadratic loss, the augmented Lagrangian is given by

![]() =

=![]()

![]()

![]() .

.

If ![]() =0, fixed

=0, fixed ![]() and

and![]() , the minimization over

, the minimization over ![]() is a quadratic problem and all components of

is a quadratic problem and all components of ![]() can be found simultaneously by solving a linear system

can be found simultaneously by solving a linear system ![]() .

.

For ![]() > 0, it is more difficult to update

> 0, it is more difficult to update ![]() directly. Fortunately, for quadratic loss, solution for FLSA with

directly. Fortunately, for quadratic loss, solution for FLSA with ![]() > 0 can be obtained by thresholding the solution for FLSA with

> 0 can be obtained by thresholding the solution for FLSA with ![]() = 0, and thus we only consider

= 0, and thus we only consider ![]() = 0.

= 0.

With ![]() =

=![]() and x=

and x=![]() fixed, the minimization over

fixed, the minimization over ![]() is a lasso regression with orthogonal design and thus we have the simple component-wise soft thresholding updating rule

is a lasso regression with orthogonal design and thus we have the simple component-wise soft thresholding updating rule

![]() =

=![]() , (4)

, (4)

where ![]() =

=![]() and

and ![]() expresses the positive part of a.

expresses the positive part of a.

For quadratic loss, the example shows that both update for ![]() and for

and for ![]() can be computed efficiently for

can be computed efficiently for ![]() = 0. However, for more general loss for

= 0. However, for more general loss for ![]() > 0, it is difficult to update β directly and thus in ontology implementation we do not use the first and the second Algorithms. By introducing another set of auxiliary variables

> 0, it is difficult to update β directly and thus in ontology implementation we do not use the first and the second Algorithms. By introducing another set of auxiliary variables ![]() , i=1,…,p, the optimizing problem (2) can be expressed as

, i=1,…,p, the optimizing problem (2) can be expressed as

![]()

![]() ,

,

s. t. ![]() , i =1,…, p.

, i =1,…, p.

![]() =

=![]() , j= 2,…, p.

, j= 2,…, p.

The corresponding (doubly) augmented Lagrangian is

![]() =

=![]()

![]()

![]() . (5)

. (5)

With the newly defined Lagrangian in (5), it can similarly modify the saddle-point problem (3) in an obvious way and it can be shown that the saddle-point problem is the same as the original FLSA problem (2). Accordingly, the following algorithms for finding the saddle point which directly extends the first Algorithm and the second Algorithm respectively (raised by [23]).

Initialize ![]() , arbitrarily.

, arbitrarily.

For k=1, 2,…

![]() =

=![]()

![]() =

=![]() , i=2,…, p,

, i=2,…, p,

![]() =

=![]() , i=1,…, p.

, i=1,…, p.

Initialize ![]() ,

, ![]() and

and ![]() , arbitrarily.

, arbitrarily.

For k=1, 2,…

![]() =

=![]()

![]() =

=![]() ,

,

![]() =

=![]() ,

,

![]() =

=![]() , i=2,…, p,

, i=2,…, p,

![]() =

=![]() , i=1,…, p.

, i=1,…, p.

4. Experiments

Two simulation experiments on ontology similarity measure and ontology mapping are designed in this section. For detail implement, we first obtain the optimal sparse vector using the algorithm raised in our paper, and then the ontology function is yielded by (1).

4.1. Experiment on Biology Data

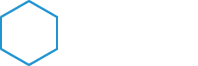

The "Go" ontology O1 was constructed by http: //www. geneontology. org. (Fig. 1 presents the graph structure of O1). We use P@N (defined by Craswell and Hawking [24]) to determine the equality of the experiment result.

Beside our ontology algorithm, ontology algorithms in Huang et al., [12], Gao and Liang [13] and Gao and Gao [14] are also acted to "Go" ontology. Then, we compare the precision ratio which we have deduced from the four tricks. Some parts of experiment results can be seen in Table 1.

Table 1. The experiment data for ontology similarity measure

| P@3 average precision ratio | P@5 average precision ratio | P@10 average precision ratio | P@20 average precision ratio | |

| Our Algorithm | 47.18% | 54.48% | 64.81% | 84.98% |

| Algorithm in [12] | 46.38% | 53.48% | 62.34% | 74.59% |

| Algorithm in [13] | 43.56% | 49.38% | 56.47% | 71.94% |

| Algorithm in [14] | 42.13% | 51.83% | 60.19% | 72.39% |

As we can see in the Table 1, when N= 3, 5, 10 or 20, the precision ratio in view of our algorithm is higher than that got by tricks which has been determined in Huang et al., [12], Gao and Liang [13] and Gao and Gao [14].

Figure 1. "Go" ontology

4.2. Experiment on Physical Education Data

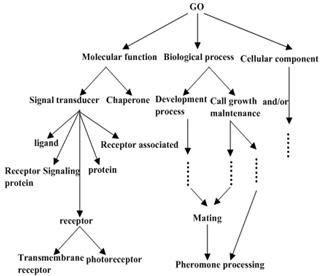

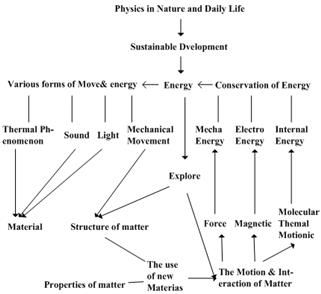

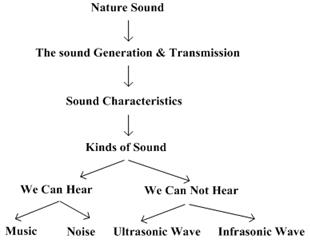

For our second experiment, we use physical education ontologies O2 and O3 (the graph structures of O2 and O3 are raised in Fig. 2 and Fig. 3 respectively). The purpose of this experiment is to construct the ontology mapping between O2 and O3. Again, P@N criterion is applied to measure the equality of the experiment results.

Furthermore, ontology technologies in Huang et al., [12], Gao and Liang [13] and Gao et al., [16] are employed to "physical education" ontology. At last, we compare the precision ratio that we have obtained from four tricks. Table 2 present several results for this experiment.

Figure 2. "Physical Education" Ontology O2

Figure 3. "Physical Education" Ontology O3.

Table 2. The experiment data for ontology mapping

| P@1 average precision ratio | P@3 average precision ratio | P@5 average precision ratio | |

| Our Algorithm | 67.74% | 78.49% | 90.32% |

| Algorithm in [12] | 61.29% | 73.12% | 79.35% |

| Algorithm in [13] | 69.13% | 75.56% | 84.52% |

| Algorithm in [16] | 67.74% | 77.42% | 89.68% |

From what we have obtained in Table 2, we find it more efficient to use our Algorithm than algorithms determined by Huang et al., [12], Gao and Liang [13] and Gao et al., [16], especially where N is sufficiently large.

5. Conclusions

In our article, a new algorithm for ontology similarity measure and ontology mapping application is presented by virtue of fused lasso signal approximator. Furthermore, experiment results reveal that our new algorithm has high efficiency in both biology and physics education. The ontology algorithm presented in our paper illustrates the promising application prospects for ontology use.

Acknowledgment

We thank the reviewers for their constructive comments in improving the quality of this paper. The research is financed by: NSFC (No.11401519), and the PhD initial funding of the second author.

References

- J. M. Przydzial, B. Bhhatarai, and A. Koleti, GPCR ontology: development and application of a G protein-coupled receptor pharmacology knowledge framework. Bioinformatics, 29(24) (2013) 3211-3219.

- S. Koehler, S. C. Doelken, and C. J. Mungall, The human phenotype ontology project: linking molecular biology and disease through phenotype data. Nucleic Acids Research, 42(D1)(2014) 966-974.

- M. Ivanovic and Z. Budimac, An overview of ontologies and data resources in medical domains. Expert Systerms and Applications, 41(11)(2014) 5158-15166.

- A. Hristoskova, V. Sakkalis, and G. Zacharioudakis, Ontology-driven monitoring of patient's vital signs enabling personalized medical detection and alert. Sensors, 14(1)(2014) 1598-1628.

- M. A. Kabir, J. Han, and J. Yu, User-centric social context information management: an ontology-based approach and platform. Personal and Ubiquitous Computing, 18(3)(2014) 1061-1083.

- Y. L. Ma, L. Liu, K. Lu, B. H. Jin, and X. J. Liu, A graph derivation based approach for measuring and comparing structural semantics of ontologies. IEEE Transactions on Knowledge and Data Engineering, 26(3)(2014) 1039-1052.

- Z. Li, H. S. Guo, Y. S. Yuan, and L. B. Sun, Ontology representation of online shopping customers knowledge in enterprise information. Applied Mechanics and Materials, 483(2014) 603-606.

- R. Santodomingo, S., Rohjans, M. Uslar, J. A. Rodriguez-Mondejar, and M.A. Sanz-Bobi, Ontology matching system for future energy smart grids. Engineering Applications of Artificial Intelligence, 32(2014) 242-257.

- T. Pizzuti, G. Mirabelli, M. A. Sanz-Bobi, and F. Gomez-Gonzalez, Food Track & Trace ontology for helping the food traceability control. Journal of Food Engineering, 120(1)(2014) 17-30.

- N. Lasierra, A. Alesanco, and J. Garcia, Designing an architecture for monitoring patients at home: Ontologies and web services for clinical and technical management integration. IEEE Journal of Biomedical and Health Informatics, 18(3)(2014) 896-906.

- Y. Y.Wang, W. Gao, Y. G. Zhang, and Y. Gao,Ontology similarity computation use ranking learning Method.The 3rd International Conference on Computational Intelligence and Industrial Application,Wuhan, China, 2010, pp.20-22.

- X.Huang, T. W. Xu, W. Gao, and Z. Y. Jia,Ontology similarity measure and ontology mapping via fast ranking method.International Journal of Applied Physics and Mathematics, 1(2011)54-59.

- W.Gao, and L.Liang,Ontology similarity measure by optimizing NDCG measure and application in physics education.Future Communication, Computing, Control and Management,142(2011)415-421.

- Y.Gao,and W.Gao,Ontology similarity measure and ontology mapping via learning optimization similarity function.International Journal of Machine Learning and Computing,2(2)(2012)107-112.

- X.Huang, T. W.Xu,W.Gao,and S.Gong,Ontology similarity measure and ontology mapping using half transductive ranking.In Proceedings of 2011 4th IEEE international conference on computer science and Information technology, Chengdu, China,2011, pp.571-574.

- W. Gao, Y. Gao, and L. Liang, Diffusion and harmonic analysis on hypergraph and application in ontology similarity measure and ontology mapping. Journal of Chemical and Pharmaceutical Research, 5(9)(2013) 592-598.

- W. Gao and L. Shi, Ontology similarity measure algorithm with operational cost and application in biology science. BioTechnology: An Indian Journal, 8(11)(2013) 1572-1577.

- W. Gao and T. W. Xu, Ontology similarity measuring and ontology mapping algorithm based on MEE criterion. Energy Education Science and Technology Part A: Energy Science and Research, 32(3)(2014) 3793-3806.

- W.Gao, Y. Gao, and Y. G. Zhang,Strong and weak stability of k-partite ranking algorithm.Information,15(11A)(2012)4585-4590.

- W.Gaoand T. W. Xu, Stability analysis of learning algorithms for ontology similarity computation.Abstract and Applied Analysis, 2013, 9 pages, http://dx.doi.org/10.1155/2013/174802.

- W. Gao and L. L. Zhu, Gradient learning algorithms for ontology computing. Computational Intelligence and Neuroscience, 2014, 12 pages, http://dx.doi.org/10.1155/2014/438291.

- W.Gao,L. Yan, and L. Liang,Piecewisefunctionapproximationand vertexpartitioningschemes formulti-dividingontologyalgorithm in AUCcriterionsetting (I).International Journal of Computer Applications in Technology, 50 (3/4)(2014)226-231.

- L. Wang, Y. You, H. Lian, A simple and efficient algorithm for fused lasso signal approximator with convex loss function, Comput Stat., (2013) 28:1699–1714.

- N. Craswell and D. Hawking, Overview of the TREC 2003 web track. Proceeding of the Twelfth Text Retrieval Conference, Gaithersburg, Maryland, NIST Special Publication, 2003, pp. 78-92.